Technical SEO is usually associated with big companies undergoing major website changes. For example, when a company with heavy traffic volume such as Nordstrom changes its website structure by migrating from HTTP to HTTPS, technical SEO is a must.

This ensures that they don’t break their website during the migration and risk losing rankings on search engines. For enterprise-level websites, technical SEO is mandatory to minimize loss of traffic. Usually, companies hire an expensive SEO agency to handle this for them.

But that doesn’t mean technical SEO is only applicable for big brands operating a huge technology stack.

Blogs and small businesses can benefit greatly from technical SEO too. For bloggers, here are 3 important technical SEO tips to help you get better rankings:

*Note: This blog will be frequently updated, so do check back on this post for more actionable tips on technical SEO for Bloggers.

1. Fix Broken Links

Broken links are dead links. They occur when somebody tries to visit a URL on your site that no longer exists.

It looks something like this –

Why are broken links a problem?

Broken links hurt user experience, waste backlinks, and prevent search engines from crawling your website properly. Broken or dead links return a 404 status code.

Nobody creates broken links intentionally. Links become broken when these things happen:

- URL typo: when you or somebody makes a mistake typing your URL into the browser. This is out of your control, so we can’t do anything about it.

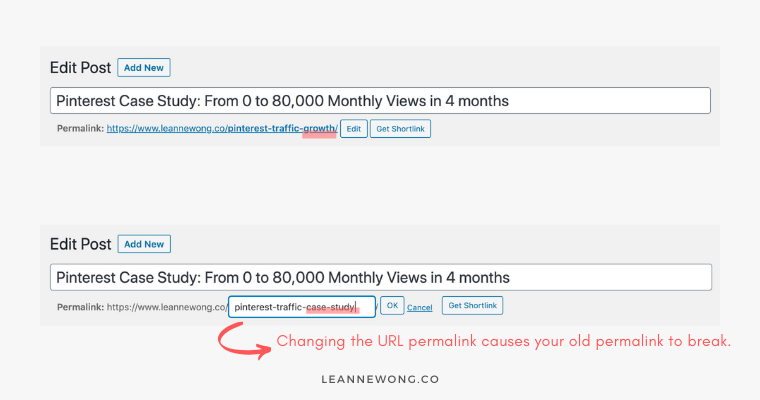

- Changing the URL name. This is when you change/update the URL of your blog post or page (or “permalink” in WP). When you change your URL permalink, you are creating a new URL. That old, previous link becomes broken because it no longer exists. So if that old URL had been ranking on Google, then sadly it becomes a dead link.

For example, when I change the URL /pinterest-traffic-growth/ to /pinterest-traffic-case-study/, the page no longer exists at the old URL.

Every page that previously linked to /pinterest-traffic-growth/ now links to a broken page, because that page no longer exists.

The same applies to social media. If you use Pinterest and created pins linking to an old URL, all those pins now point to a broken page. So all my pins linking to the old URL /pinterest-traffic-growth/ effectively point to a broken page.

Fix broken links by updating your old URLs to the new URL or by implementing 301 redirects

You can fix broken links by simply:

- Updating all pages and social media posts that contain a link to your old URL, replacing it with the new URL

- Installing a 301 redirect plugin

What are 301 Redirects?

For those who want to learn a bit more about 301 redirects: the 301 refers to an HTTP response status code that means “Moved Permanently”. The redirect is just as it sounds: it sends visitors who request a specific page to another page instead.

In the above screenshot, we see that a 301-redirect has been implemented:

When we access the page https://www.marimekko.com/eu_en/accessories, it redirected us to https://www.marimekko.com/eu_en/accessories/all-items

The redirected URL is the final URL. The first URL, https://www.marimekko.com/eu_en/accessories, is probably an old page that has been deleted and no longer exists. After creating the new URL, https://www.marimekko.com/eu_en/accessories/all-items, they implemented a 301 redirect as a proper content retirement strategy.

Now whenever you access the page https://www.marimekko.com/eu_en/accessories, you won’t get a “Page Not Found” broken link (status code 404), but a valid page that is redirected permanently (status code 301).
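To make the mechanics concrete, here is a minimal Python sketch of what a redirect rule does when a page is requested. The `REDIRECTS` table, the `LIVE_PAGES` set, and the `respond` function are hypothetical names made up for illustration; a real redirect plugin stores and applies its rules in its own way.

```python
# Hypothetical redirect table: old path -> new path.
REDIRECTS = {
    "/pinterest-traffic-growth/": "/pinterest-traffic-case-study/",
}

# Hypothetical set of pages that currently exist on the site.
LIVE_PAGES = {"/", "/pinterest-traffic-case-study/"}


def respond(path):
    """Return (status_code, location) for a requested path."""
    if path in REDIRECTS:
        # Old URL with a redirect rule: send the visitor to the new URL.
        return 301, REDIRECTS[path]
    if path in LIVE_PAGES:
        # The page exists, so it is served normally.
        return 200, path
    # No page and no redirect rule: this is a broken link.
    return 404, None


if __name__ == "__main__":
    for path in ["/pinterest-traffic-growth/", "/old-post/"]:
        print(path, "->", respond(path))
```

Without the entry in `REDIRECTS`, the old path would fall through to the 404 branch, which is exactly the broken-link situation described earlier.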

On WordPress, here are a few plugins that can do the job –

Case Study: Auditing Finnish Design House Marimekko

Now, if you have been blogging actively for more than 6 months, there is a very high chance you have broken links on your site.

You might have changed the permalink of old blog posts and forgotten to update the links pointing to them or to set up any redirects.

In the following section, we will run a simple crawl of the Finnish brand Marimekko’s website to audit it for broken links.

Step 1: Use ScreamingFrog to crawl your website.

ScreamingFrog is free to download, and the free version can crawl up to 500 URLs.

Step 2: Find URLs with status code 404

- In this example, we are using the website https://www.marimekko.com/ to illustrate how to audit broken links. First, paste the entire domain in ScreamingFrog

- Next, click on the “Response Codes” tab, then filter to “Client Error (4xx)”. These are URLs on the Marimekko site with a 4xx status code. Look for 404.

- Next, click on the identified 404 URL and click on the “Inlinks” tab at the bottom.

- Finally, check the “From” column, which shows you which page is pointing to the broken link. You have to go to each “From” page and replace the broken link with a valid URL.

- To URL: In this example, we see that the broken link is https://www.marimekko.com/eu_en/napitus-piccolo-shirt-blue-pink-047183-775

- From URL: And the page linking to that broken link is https://www.marimekko.com/eu_en/inspiration/seinabo-sey

Step 3: Check on your website where that broken link is

Now, this is the final step to fix the broken link found on your site. We’ll head to the “From URL” (https://www.marimekko.com/eu_en/inspiration/seinabo-sey) and find where the broken link resides.

And from inspecting the page, we found that the broken link was in the “Napitus Piccolo shirt” hyperlink which is pointing to an old, broken page.

Clicking on that hyperlink takes us to the broken page which we found earlier on ScreamingFrog.

There you go!

That’s how you can crawl your own website and find which pages on your site have broken links.
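If you are curious what a crawler does under the hood, the audit above can be sketched in a few lines of Python. This is not how ScreamingFrog is implemented; it is a simplified illustration that works on an in-memory copy of a site. The `SITE` dictionary and its paths are made up, and `LinkExtractor` and `find_broken_links` are hypothetical helper names.

```python
from html.parser import HTMLParser


class LinkExtractor(HTMLParser):
    """Collect the href value of every <a> tag in an HTML document."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.append(href)


def find_broken_links(pages):
    """Map each broken internal path to the pages that link to it.

    `pages` maps a path to its HTML. A link counts as broken when it
    starts with "/" but matches no key in `pages`.
    """
    broken = {}
    for from_path, html in pages.items():
        parser = LinkExtractor()
        parser.feed(html)
        for to_path in parser.links:
            if to_path.startswith("/") and to_path not in pages:
                broken.setdefault(to_path, []).append(from_path)
    return broken


# Made-up two-page site; the paths are illustrative only.
SITE = {
    "/inspiration/": '<a href="/shirt-old/">Shirt</a> <a href="/shop/">Shop</a>',
    "/shop/": '<a href="/inspiration/">Inspiration</a>',
}

if __name__ == "__main__":
    for to_path, from_paths in find_broken_links(SITE).items():
        print("To:", to_path, "From:", from_paths)
```

The result mirrors the “To” and “From” columns in the crawl report: each broken URL is listed together with the pages you need to edit.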

2. Optimize Internal Links

Poor internal linking is one of the most common technical SEO issues, and it is also the easiest to fix. It happens when no other pages on your site link to a given page.

A lack of internal links leads to a problem called “orphaned pages”.

If there are pages without any internal links, it makes it harder for Google to find them.

What are orphaned pages?

Orphaned pages have no other pages on your website linking to them. Literally, orphaned.

So it becomes very difficult for users or Googlebot (Google’s web crawler) to find them, because there’s no pathway to these inner pages unless someone types the specific URL directly into the browser.

This often happens when we create new content without linking our old, existing content to it, or vice versa.

Imagine pages on your website that are not connected to any of your main pages. They are like obscure pages that live alone on your site.
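Here is a minimal Python sketch of how orphaned pages can be detected once you know which pages link to which. The `LINKS` graph is made up for illustration, and `find_orphans` is a hypothetical helper, not part of any SEO tool.

```python
def find_orphans(links, home="/"):
    """Return pages that no other page links to.

    `links` maps each page path to the list of internal paths it links to.
    The home page is excluded because visitors reach it directly.
    """
    linked = set()
    for from_path, targets in links.items():
        for target in targets:
            if target != from_path:  # a self-link does not count
                linked.add(target)
    return sorted(p for p in links if p not in linked and p != home)


# Made-up link graph: "/forgotten-post/" links out, but nothing links to it.
LINKS = {
    "/": ["/blog/", "/about/"],
    "/blog/": ["/", "/start-a-blog/"],
    "/about/": ["/"],
    "/start-a-blog/": ["/blog/"],
    "/forgotten-post/": ["/blog/"],
}

if __name__ == "__main__":
    print(find_orphans(LINKS))
```

Note that the orphan here still links out to other pages. What makes it an orphan is that nothing links in.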

Optimize internal links to improve site architecture

With a better site architecture, people are better able to locate all your pages and this helps SEO too!

So make sure you have at least 3-4 internal links in every article that you publish.

For example, in my article about How to Start a Blog and Make Your First $1K, I add at least 3 internal links to articles that are related to my content.

Make sure that you keyword-optimize your anchor text too! Avoid using zero-value anchor text like “learn more” or “click here” when linking to your own articles.

I tend to use the entire blog post title or the primary keyword of my articles as the anchor text. It helps people see what the linked page is about, and it is another opportunity to increase keyword density.
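As a rough illustration, the two checks above (counting internal links and spotting zero-value anchor text) could be automated with a short Python script. Everything here is an assumption for the sake of the example: the `POST` snippet, the `example.com` host, and the `AnchorCollector` and `audit_internal_links` names are all made up.

```python
from html.parser import HTMLParser
from urllib.parse import urlparse

# Zero-value anchor texts named in this article.
GENERIC_ANCHORS = {"learn more", "click here"}


class AnchorCollector(HTMLParser):
    """Collect (href, anchor text) pairs from an HTML fragment."""

    def __init__(self):
        super().__init__()
        self.anchors = []
        self._href = None
        self._text = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._href = dict(attrs).get("href")
            self._text = []

    def handle_data(self, data):
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            self.anchors.append((self._href, "".join(self._text).strip()))
            self._href = None


def audit_internal_links(html, site_host):
    """Return (internal link count, list of generic anchor texts)."""
    parser = AnchorCollector()
    parser.feed(html)
    internal = [
        (href, text)
        for href, text in parser.anchors
        # Relative links have an empty host; treat them as internal too.
        if urlparse(href).netloc in ("", site_host)
    ]
    generic = [text for _, text in internal if text.lower() in GENERIC_ANCHORS]
    return len(internal), generic


# Made-up post body with two internal links and one external link.
POST = (
    '<p>Read <a href="/start-a-blog/">How to Start a Blog</a>, then '
    '<a href="https://example.com/pinterest/">click here</a> and visit '
    '<a href="https://other.example.org/">this tool</a>.</p>'
)

if __name__ == "__main__":
    count, generic = audit_internal_links(POST, "example.com")
    print("Internal links:", count, "| Generic anchors:", generic)
```

A post that reports fewer than three internal links, or any generic anchors, would be a candidate for a quick edit.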

3. Add an XML Sitemap to Improve Crawlability

Crawlability is about discoverability. It refers to a search engine’s ability to crawl and find content on your site.

One of the easiest and most beneficial steps you can take for SEO is to add an XML sitemap to your website.

There are billions of webpages on the Internet and Googlebot is trying its best to find and index every webpage.

Googlebot does this by following links on webpages. It goes from link to link, bringing information about each webpage it finds back to Google’s servers.

To rank well on Google’s search results, we just have to help Google find and crawl our pages faster and better.

You can check if your website has an XML sitemap by adding /sitemap.xml to the end of your domain.
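The same check can be scripted. The Python sketch below builds the conventional sitemap address and, optionally, requests it. `sitemap_url` and `has_sitemap` are hypothetical helper names and `example.com` is a placeholder domain. Keep in mind that some sites keep their sitemap at a different address or reject this kind of request, so a `False` result does not prove that no sitemap exists.

```python
import urllib.error
import urllib.request
from urllib.parse import urljoin


def sitemap_url(site):
    """Build the conventional sitemap address for a site's URL."""
    return urljoin(site, "/sitemap.xml")


def has_sitemap(site, timeout=10):
    """Return True if the conventional sitemap address answers with 200.

    This makes a real network request, so it needs an internet connection.
    """
    request = urllib.request.Request(sitemap_url(site), method="HEAD")
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status == 200
    except urllib.error.URLError:
        # Covers 404 responses as well as connection problems.
        return False


if __name__ == "__main__":
    print(sitemap_url("https://www.example.com/blog/"))
```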

An XML sitemap exists to help search engine crawlers find and index your content.

What is an XML Sitemap?

An XML sitemap is a file that lists the web pages on your website.

It does two things:

- Tells search engines about the structure of your website and the important pages that should be crawled and indexed.

- Includes information about each URL, such as when it was last updated, how often it changes, and how important it is in relation to other URLs on your site.
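To show what such a file contains, here is a small Python sketch that generates a sitemap with the standard library. The `ENTRIES` list uses a placeholder domain and made-up dates, and `build_sitemap` is a hypothetical helper; in practice your CMS or SEO plugin generates this file for you. The tag names (`loc`, `lastmod`, `changefreq`, `priority`) come from the sitemap protocol.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemap protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(entries):
    """Return sitemap XML as a string.

    Each entry is a dict with a "loc" key and optional "lastmod",
    "changefreq", and "priority" keys.
    """
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for entry in entries:
        url = ET.SubElement(urlset, "url")
        for tag in ("loc", "lastmod", "changefreq", "priority"):
            if tag in entry:
                ET.SubElement(url, tag).text = str(entry[tag])
    return ET.tostring(urlset, encoding="unicode")


# Placeholder URLs and made-up dates, for illustration only.
ENTRIES = [
    {
        "loc": "https://example.com/",
        "lastmod": "2024-01-15",
        "changefreq": "weekly",
        "priority": 1.0,
    },
    {"loc": "https://example.com/start-a-blog/", "lastmod": "2024-01-10"},
]

if __name__ == "__main__":
    print(build_sitemap(ENTRIES))
```

Each `url` element corresponds to one page, and the optional tags carry the extra information described in the second bullet.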

How to create an XML Sitemap

Most CMS platforms (WordPress, Squarespace, etc.) let you create an XML sitemap easily. If you are running on WordPress, the Yoast SEO plugin can create an XML sitemap in under a minute.

Some useful articles on XML sitemap for each CMS:

For Squarespace users

For Wix users

For Joomla users

For Drupal users

Hope you enjoyed this article! Questions? Drop your comments below!

