Google plays a significant role in how you perform online. As the largest search engine in the world, it dominates how people search for and find information.

The higher you can rank on Google’s search results, the more visible your website will be. That means more organic traffic (that’s free) and more qualified visitors to your website.

But none of that matters if your website is not indexable. If your website, or important pages on it, are not in Google’s index, they won’t show up in Google’s search results.

So you want to make it easy for Google to find you. Make sure your website pages, blog content, images, videos, and other online content are indexable.

This guide explains what Google indexing is, how to check whether your website is indexed on Google, and ways to get Google to index your website faster.

Content List

- What is crawling and indexing on Google?

- How to get Google to index your website?

- Why does it matter if Google cannot index your website?

- How long does it take for Google to index new or updated content?

- Does Google prioritize fresh content?

- Step 1: Check if your website is indexed on Google already

- Step 2: Create an XML Sitemap

- Step 3: Update your XML sitemap with new pages

- Step 4: Submit your XML Sitemap to Google

- Step 5: Inspect any individual page URL and request indexing

- Step 6: Use Internal Links on Your Website

- Step 7: Add internal links in the navigation menu and footer

- Step 8: Submit your website to directories

- Step 9: Check for Google crawl errors regularly

- Step 10: Create unique and SEO-rich content

- Step 11: Update old pages on your website

- Step 12: Build backlinks and improve domain authority

- Step 13: Promote social sharing of your content

What is crawling and indexing on Google?

When you run a search, Google turns to its index (a database of web pages) to display the most relevant results for your query.

Web crawlers, or bots (e.g. Googlebot), browse the web for the purpose of indexing. If your website is not in Google’s index, it won’t show up in its search results.

Crawling is the process of following links to discover new or updated pages on a website. Google’s web crawlers crawl your site first, before its pages are added to the index.

Google indexing means that Google has crawled a page, analyzed its content, and stored it in the Google index. Learn more about how Google crawling and indexing work.

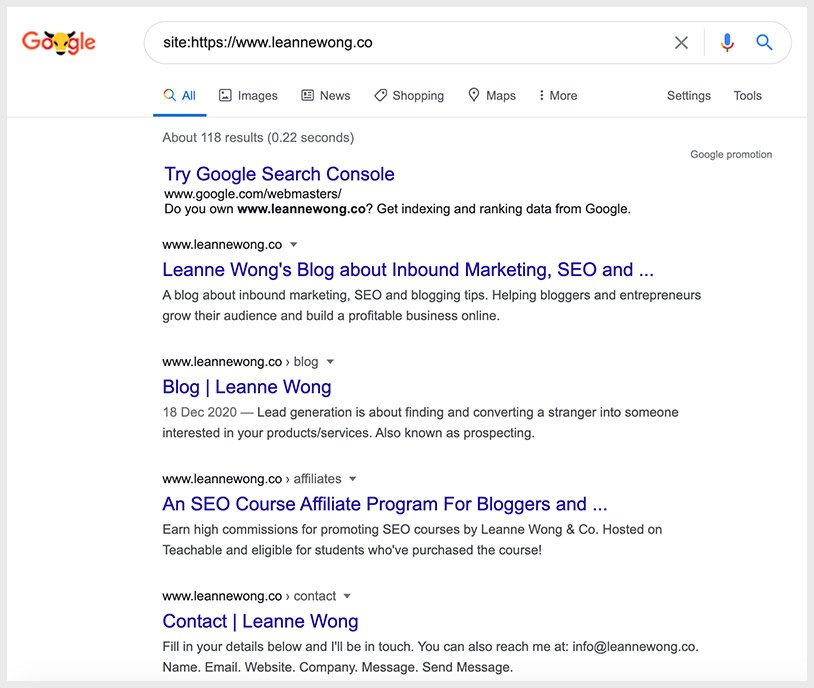

The easiest way to check what pages on your website are indexed on Google is to use the site search operator.

How to get Google to index your website?

Google will try to find and crawl every page it can discover on the web. If your website pages are not indexed on Google yet, it may just take some time for Google’s search index to update.

In some cases, pages may be blocked by the robots.txt file. More on that later.

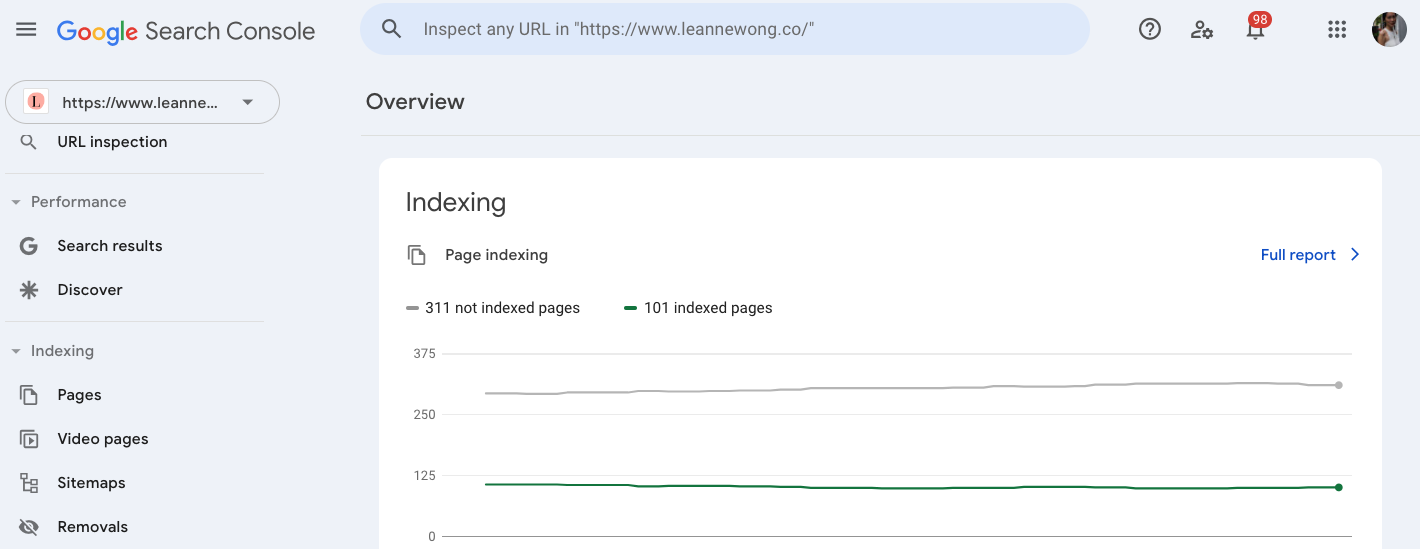

One of the best ways to check Google’s indexation of your website is with Google Search Console.

As the site owner, you can create a Google Search Console account for your domain. You can also link it to Google Analytics.

The Index Coverage report shows you the indexing status of all URLs that Google has found on your site.

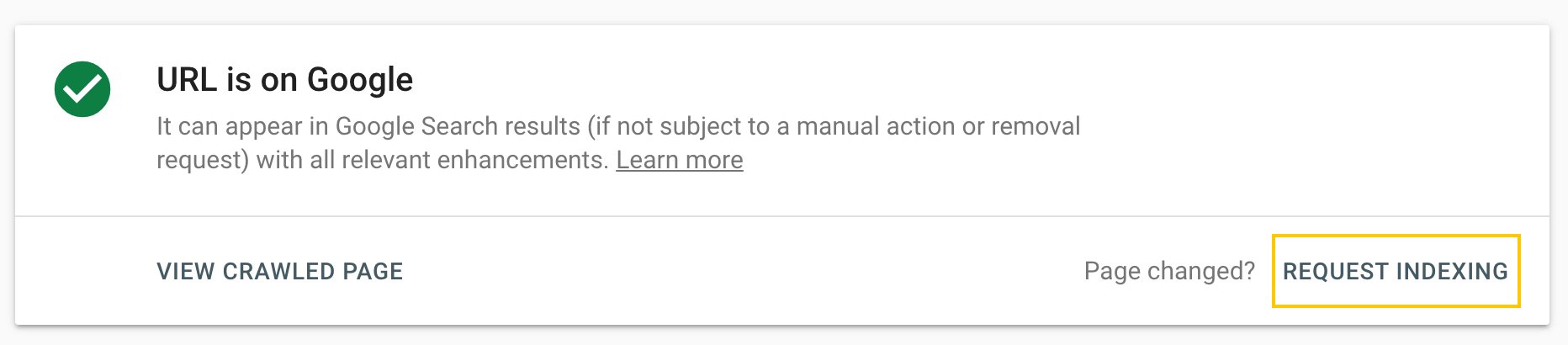

Ask Google to crawl your page and request indexing

When you have published a new blog post, this is an easy way to help speed up the indexing process.

- Step 1: Go to Google Search Console

- Step 2: Click on your website property

- Step 3: Click on URL Inspection

- Step 4: Paste the URL you want Google to index into the search bar

- Step 5: Wait for Google to check the URL

- Step 6: Click the ‘Request Indexing’ button

How long does it take for Google to index new or updated content?

Google’s John Mueller said it can take “several hours to several weeks” for Google to index new content or updated content.

Indexing can take longer if Googlebot is busy crawling other, higher-priority sites, or if technical issues on your website make crawling and indexing difficult.

Ensure that your website is optimized so Google prioritizes the most important content for indexing.

Internal Linking

One possible reason pages are not indexed is that Googlebot could not find them during the crawl.

Poor internal linking structures are often the cause.

A great way to speed up Google indexing is to add internal links, such as linking to a newly published blog post or page from the homepage.
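To see how poor internal linking can hide a page from a crawler, here is a minimal Python sketch. It assumes a hypothetical site described as a dictionary that maps each page path to the internal links found on that page (the paths and the `find_unreachable_pages` helper are invented for illustration). It follows links from the homepage the way a crawler would and reports any page it never reaches.

```python
from collections import deque

def find_unreachable_pages(links, start="/"):
    """Return pages that cannot be reached by following internal links from `start`."""
    seen = {start}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in seen:
                seen.add(target)
                queue.append(target)
    # Every known page that the walk never visited has no link path from the homepage.
    all_pages = set(links) | {t for targets in links.values() for t in targets}
    return sorted(all_pages - seen)

# Hypothetical site: "/blog/new-post/" exists, but no other page links to it.
site_links = {
    "/": ["/blog/", "/about/"],
    "/blog/": ["/blog/old-post/"],
    "/about/": [],
    "/blog/old-post/": ["/"],
    "/blog/new-post/": ["/blog/"],
}

print(find_unreachable_pages(site_links))
```

In this made-up example the new post is the only page reported. Adding a link to it from the homepage or the blog index would make it reachable.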

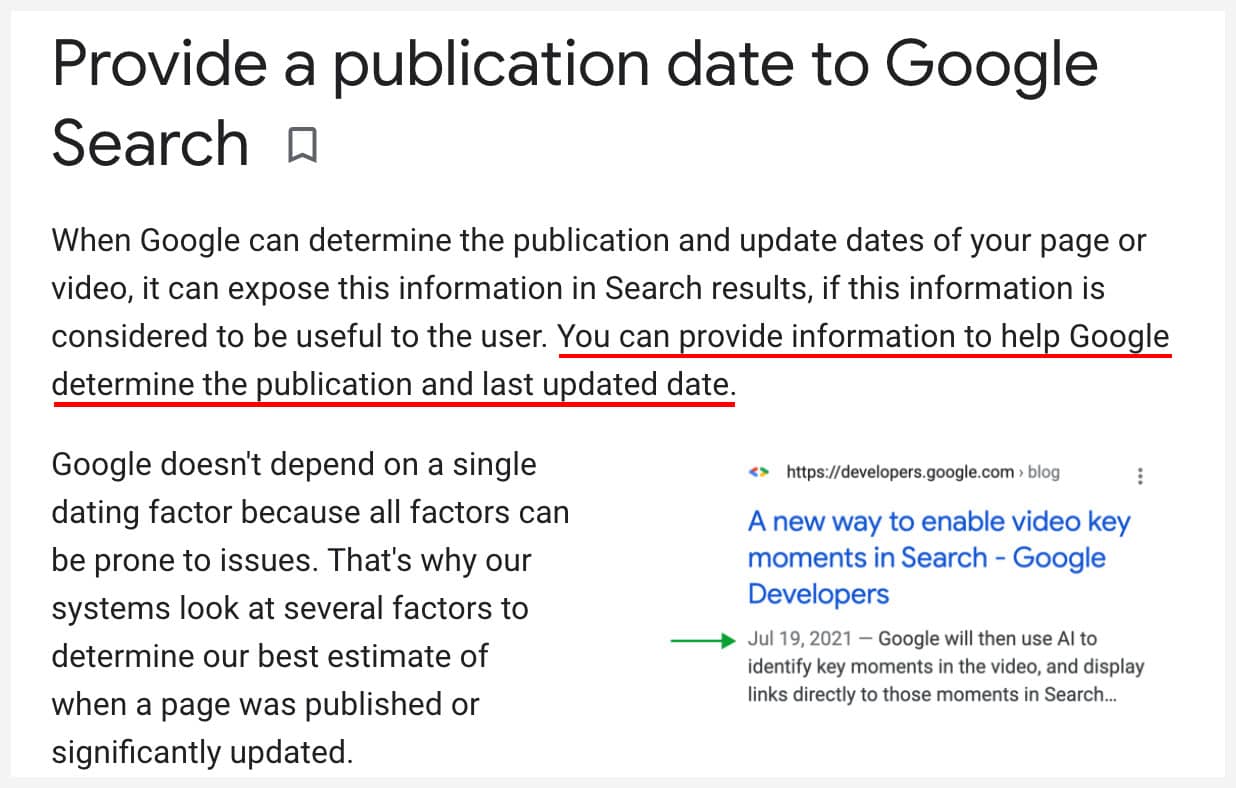

Does Google prioritize fresh content?

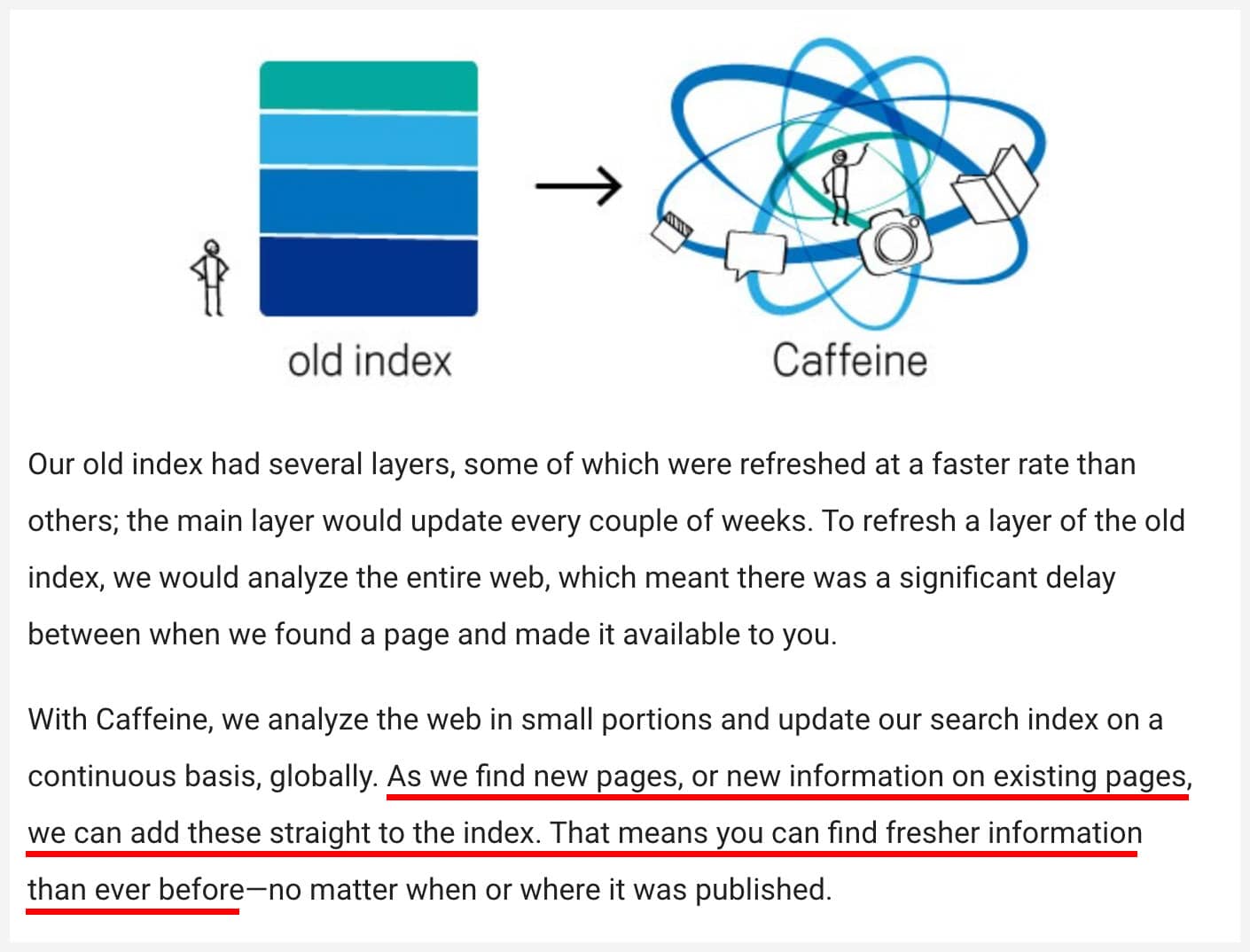

Google has said that it wants to give users fresher and more recent search results.

That announcement built on Caffeine, the web indexing system that allowed Google to crawl and index the web for fresh content quickly and at scale. In Google’s words:

“Building upon the momentum from Caffeine, today we’re making a significant improvement to our ranking algorithm that impacts roughly 35 percent of searches and better determines when to give you more up-to-date relevant results for these varying degrees of freshness.”

So yes! It is good practice to update your content, because Google makes it a priority to find and index updated content.

Recently published, rewritten, or updated content is favored because it is more likely to be accurate, which means better results for users.

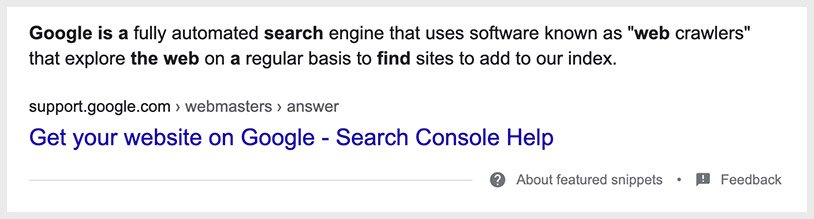

Also, the last updated or published date can appear in Google search. It is displayed in the search results next to the meta description, which can influence click-through rate (CTR).

Robots.txt file

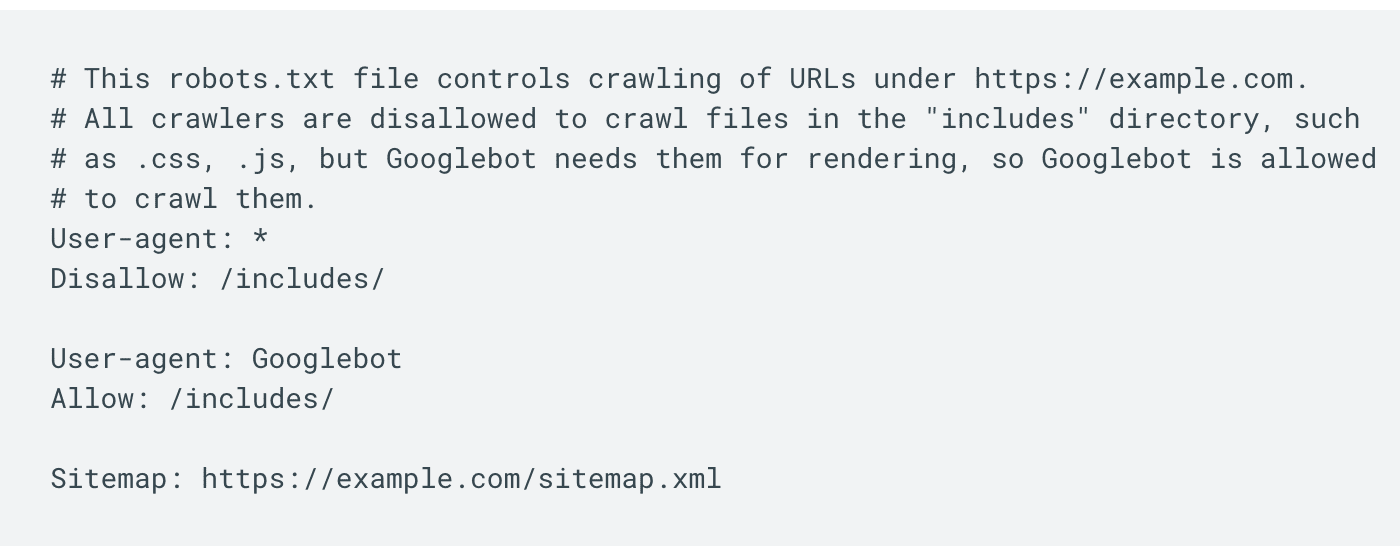

The robots.txt file tells search engine web crawlers which pages on your site the crawler can access.

It is primarily used to manage web crawler traffic to your site, for instance if you think your server will be overwhelmed by requests from the crawler. Learn more about Googlebot crawl rate.

It can also be used to optimize crawling. So if there are unimportant pages on your site that you don’t want crawlers to find, you can instruct the crawler not to crawl them.

For instance, you may want to prevent certain web documents such as PDFs, or content types like images, videos, or audio files, from appearing in Google’s search results.

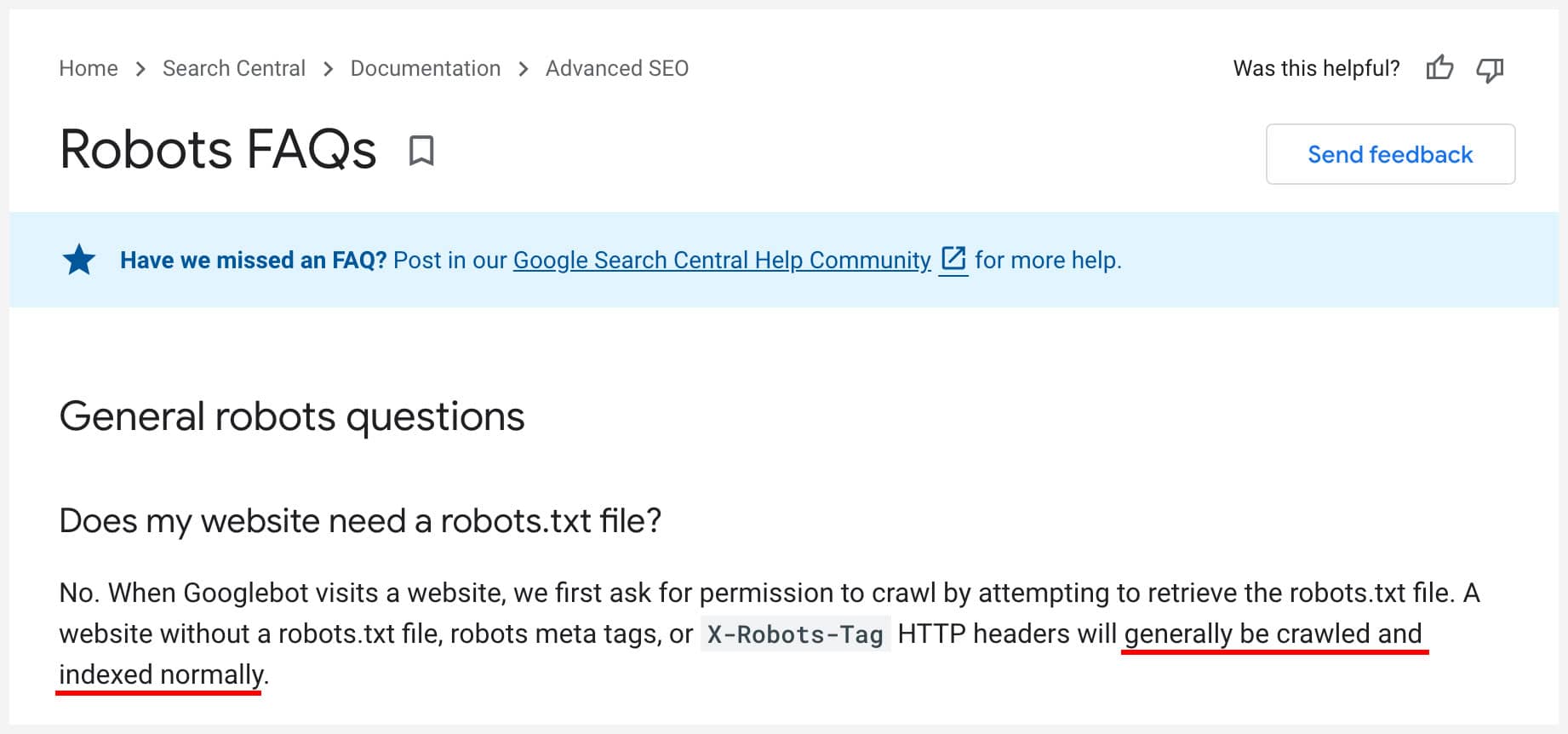

Do you need a robots.txt file?

Google says nope! If you don’t have one, Googlebot will crawl and index your website normally.

How to block crawlers from accessing sections of your site

You can define specific crawl block rules in the robots.txt file. Here’s an example from Google:

This essentially tells all crawlers that they are not allowed to crawl the “includes” folder, except Googlebot, which is allowed to. Lol!
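Rules like these can be tried out in a few lines of Python before you publish them. The robots.txt text in this sketch is a reconstruction based on the description above, not a copy of Google’s example, and the `can_crawl` helper, the bot name `SomeOtherBot`, and the example.com URL are assumptions for illustration. It uses the standard library’s `urllib.robotparser`, whose matching may differ in edge cases from how Googlebot itself interprets a file.

```python
from urllib.robotparser import RobotFileParser

# Assumed robots.txt content, reconstructed from the description above.
ROBOTS_TXT = """\
User-agent: *
Disallow: /includes/

User-agent: Googlebot
Allow: /includes/
"""

def can_crawl(user_agent, url, robots_txt=ROBOTS_TXT):
    """Return True if `user_agent` may fetch `url` under the given rules."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)

url = "https://example.com/includes/header.html"
print("Googlebot allowed:", can_crawl("Googlebot", url))
print("SomeOtherBot allowed:", can_crawl("SomeOtherBot", url))
```

A check like this is a cheap way to confirm that a new rule does not block more than you intended.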

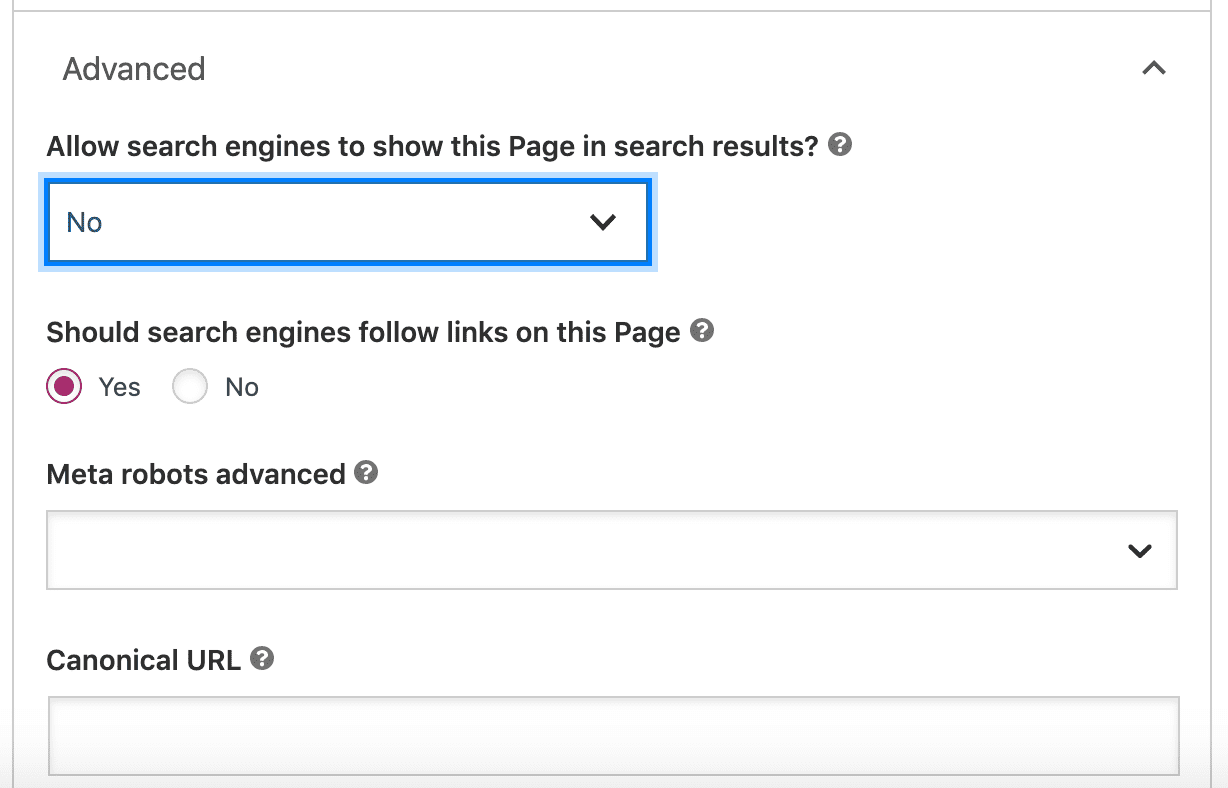

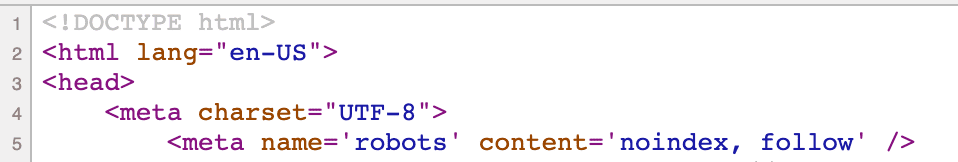

If you want to block specific pages from appearing in the search results, you can use the noindex directive.

This can be implemented by adding a noindex meta tag to the page or by returning a noindex header in the HTTP response.

When Google crawls a page and sees the noindex tag or header, Googlebot will drop that page from Google search results entirely.

Using noindex is useful for keeping pages out of the search results page by page.

WordPress Yoast SEO

On WordPress, the Yoast SEO plugin allows you to exclude any post or page from appearing in search results. This is one way to implement noindex.

How the noindex, follow meta tag shows in the source code:
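The tag usually takes the form `<meta name="robots" content="noindex, follow">` inside the page’s `<head>`, and the HTTP header equivalent is `X-Robots-Tag: noindex`. As a rough way to confirm that a page really carries the tag, the Python sketch below parses a hypothetical page source with the standard library. The `RobotsMetaParser` class, the `is_noindex` helper, and the sample page are all made up for illustration.

```python
from html.parser import HTMLParser

class RobotsMetaParser(HTMLParser):
    """Collect the directives of any <meta name="robots"> tag."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "meta" and (attributes.get("name") or "").lower() == "robots":
            content = attributes.get("content") or ""
            # "noindex, follow" becomes ["noindex", "follow"].
            self.directives += [d.strip().lower() for d in content.split(",") if d.strip()]

def is_noindex(html):
    """Return True if the page carries a noindex robots meta tag."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return "noindex" in parser.directives

# Hypothetical page source with the tag in its <head>.
page = """<html><head>
<title>Thank you</title>
<meta name="robots" content="noindex, follow">
</head><body>Thanks for subscribing!</body></html>"""

print("noindex present:", is_noindex(page))
```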

Be careful with editing your robots.txt file as you don’t want to accidentally block your entire site.

The best practice is to keep your robots.txt file clean and simple. Avoid creating any crawl blocks unnecessarily.

Duplicate content

When you have multiple different URLs that point to the same page, Google considers these pages duplicate content.

In other words, if there are multiple pages with a large amount of identical content, these are considered duplicate pages.

Different versions of the same page, such as a version with parameters in the URL but the same content, do not add any value.

Google tries not to index duplicate content, mirror images that are the same, and content that is not useful.

Google’s main goal is to provide the best answer to the user’s search query with high-quality content.

To speed up Google indexing, avoid creating duplicate content. Use canonical tags to fix duplicate pages.
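One rough way to spot parameter-based duplicates is to strip the parameters from a list of crawled URLs and see which ones collapse onto the same address. The Python sketch below does that under a loud assumption: that query parameters never change the page content, which is not true of every site (pagination and search pages are common exceptions). The URLs and helper names are hypothetical.

```python
from collections import defaultdict
from urllib.parse import urlsplit, urlunsplit

def strip_parameters(url):
    """Drop the query string and fragment so URL variants compare equal."""
    parts = urlsplit(url)
    return urlunsplit((parts.scheme, parts.netloc.lower(), parts.path, "", ""))

def group_duplicates(urls):
    """Map each parameter-free URL to the variants that collapse onto it."""
    groups = defaultdict(list)
    for url in urls:
        groups[strip_parameters(url)].append(url)
    # Keep only the groups with more than one variant.
    return {base: variants for base, variants in groups.items() if len(variants) > 1}

# Hypothetical URLs from a crawl export.
crawled = [
    "https://example.com/shoes/",
    "https://example.com/shoes/?utm_source=newsletter",
    "https://example.com/shoes/?sort=price",
    "https://example.com/hats/",
]

for base, variants in group_duplicates(crawled).items():
    print(base, "has", len(variants), "URL variants")
```

Each group found this way is a candidate for a canonical tag pointing at the parameter-free URL.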

Canonical Tag

If you have duplicate pages with the same or similar content, you can consolidate the duplicate URLs with a canonical tag. Common examples are:

- A printer-friendly version of a page and its regular version.

- Product pages that are accessible or linked to by different URLs.

Simply choose the URL which is the original and place it in the canonical tag. This original URL is also referred to as the canonical URL.

The canonical tag explicitly tells Google which page URL is the original version.

Even if you don’t do this, Google will try to determine the original version and mark it as canonical.
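In the page source, a canonical tag is a `<link rel="canonical" href="...">` element in the `<head>`. The Python sketch below reads the canonical URL out of a hypothetical printer-friendly page, which can be handy for checking that duplicates point where you expect. The `CanonicalParser` class, the `get_canonical` helper, and the example.com URLs are assumptions for illustration.

```python
from html.parser import HTMLParser

class CanonicalParser(HTMLParser):
    """Record the href of the first <link rel="canonical"> tag."""

    def __init__(self):
        super().__init__()
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        rel = (attributes.get("rel") or "").lower().split()
        if tag == "link" and "canonical" in rel and self.canonical is None:
            self.canonical = attributes.get("href")

def get_canonical(html):
    """Return the canonical URL declared in the page, or None if there is none."""
    parser = CanonicalParser()
    parser.feed(html)
    return parser.canonical

# Hypothetical printer-friendly page pointing at its regular version.
print_version = """<html><head>
<link rel="canonical" href="https://example.com/guide/">
</head><body>Printer-friendly guide</body></html>"""

print(get_canonical(print_version))
```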

Benefits of choosing a canonical URL

Choosing a canonical URL when you have a set of duplicate or highly similar pages helps with SEO.

- Specifies the page that you want to appear in search results. The canonical URL is the one that will rank.

- Consolidates link juice from backlinks. The authority from external links can be consolidated into one single, original version. Multiple URLs of the same content can attract backlinks as individual pages. This splits the link juice as it is shared across different pages. Choosing a canonical page accumulates and strengthens the page authority of that page and its ranking ability.

- Optimizes the crawl budget and gets the most out of Googlebot crawling your site. Instead of wasting time crawling duplicate pages, Googlebot can crawl new web pages or updated content.

Why does it matter if Google can’t index your website?

If Google cannot index your website or certain important pages on your website, it won’t show up on search results. That means a loss of traffic.

Your website needs to be indexed so that it can be shown on Google’s search results.

Having your website indexed on Google means that it is stored in Google’s database. It does not mean that it will rank high. That is a topic for another post – SEO Case Study: How to Rank in the First 2 Results on Google.

Normally, you don’t have to do anything and Google will find your website and any new content eventually.

If you find that certain pages take a long time to be indexed, you can try to speed this up.

Update your XML sitemap with new pages, or submit those pages in Google Search Console for Google to re-crawl.

Both methods are explained in more detail below.

Here are 13 ways to get Google to find and index your website faster.

Step 1: Check if your website is indexed on Google already

The first thing you want to do is to check whether or not your website is already indexed.

Enter your domain with the ‘site:’ search operator on Google, for example site:yourdomain.com.

The results will show you all the pages on your website that are currently indexed on Google. If certain pages that you want indexed are missing from the results, those pages are likely not indexed yet.
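If you run this check often, or for several sections of a site, the search URL can be generated rather than typed. This is a small Python sketch; the `site_search_url` helper and the example.com domain are hypothetical, and it only builds the link for you to open in a browser.

```python
from urllib.parse import urlencode

def site_search_url(domain, path=""):
    """Build a Google search URL that uses the site: operator."""
    query = f"site:{domain}{path}"
    return "https://www.google.com/search?" + urlencode({"q": query})

# Whole domain, then only the blog section.
print(site_search_url("example.com"))
print(site_search_url("example.com", "/blog/"))
```

Narrowing the operator to a path such as /blog/ is a quick way to check one section at a time.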

If a page is not indexed, it is likely still being processed, but in some cases you’ll want to try to speed this up.

Do this by updating your XML sitemap with those pages or submitting those pages in Google Search Console for Google to re-crawl. Both methods are explained in more detail below.

Step 2: Create an XML Sitemap

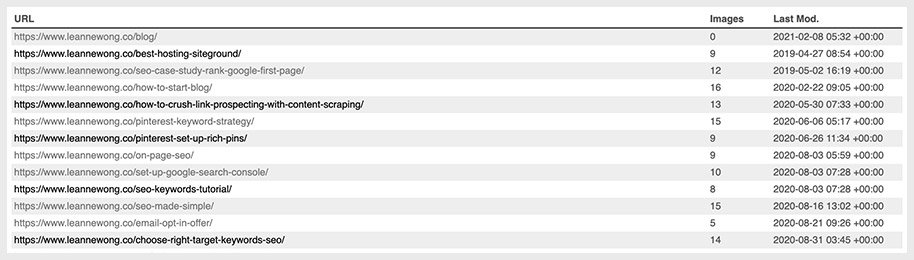

An XML Sitemap is essentially a list of website URLs in XML format. It helps Google to find the most important pages on your website easily and more intelligently.

If there’s a quick win right now for your SEO, it would be creating an XML sitemap for your website. It’s really easy to do and often automated.

There are two ways to create an XML Sitemap:

- Create an XML sitemap with a plugin

- Create an XML sitemap manually without a plugin

Create an XML sitemap with a plugin

Most websites run on a content management system (CMS) such as WordPress or Squarespace, which makes it easy to create an XML sitemap. On WordPress, the Yoast SEO plugin takes care of that for you and even automatically updates the sitemap when you publish new pages.

Create an XML sitemap manually without a plugin

If your website is not using a CMS, you can use Screaming Frog to create an XML sitemap. It provides free crawling for up to 500 pages, which is a good start for most website owners.
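For a very small site, a sitemap can also be produced with a short script. The Python sketch below writes one with the standard library, using the namespace from the sitemaps.org protocol. The page URLs, dates, and the `build_sitemap` helper are hypothetical, and a real site would pull its URL list from its own pages rather than a hard-coded list.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"

def build_sitemap(pages):
    """Build sitemap XML from (url, last_modified) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in pages:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = lastmod
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body

# Hypothetical pages and last-modified dates.
pages = [
    ("https://example.com/", "2021-01-15"),
    ("https://example.com/blog/google-indexing/", "2021-02-01"),
]

print(build_sitemap(pages))
```

Saving the output as sitemap.xml at the root of the site gives you a file to submit in Step 4.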

On WordPress but don’t want to use a plugin? Good news: WordPress 5.5 and later automatically creates a default XML sitemap for your website.

Step 3: Update your XML sitemap with new pages

As you publish new pages on your website or update your content, you’ll want to update your sitemap. On a WordPress site, the Yoast SEO plugin or the All in One SEO plugin does this for you automatically.

You can also regenerate your sitemap with Screaming Frog and re-submit your updated XML sitemap to Google.

Step 4: Submit your XML Sitemap to Google

After creating the XML sitemap, you can submit it to Google Search Console. You’ll need to create an account on Google Search Console first. Here’s a guide to set that up if you haven’t done so yet.

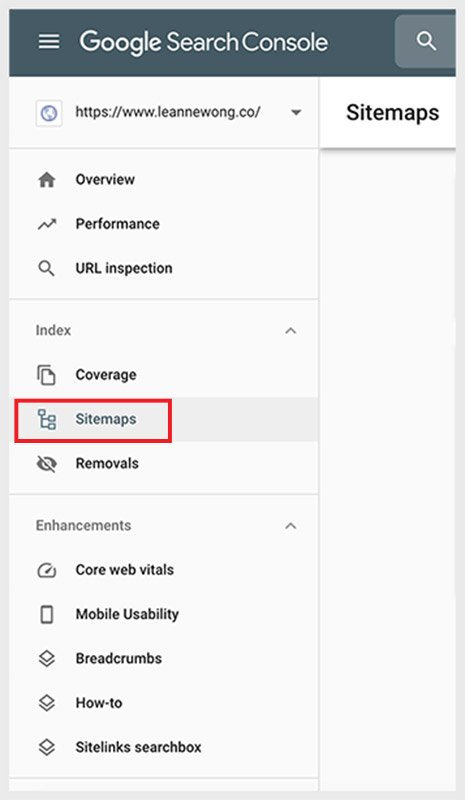

In the sidebar, select your website and click on the Sitemaps tab. Remove any outdated XML sitemaps first, then submit your updated sitemap.

Do this regularly if you update your website frequently. It will help Google crawl your webpages better and speed up the indexation process.

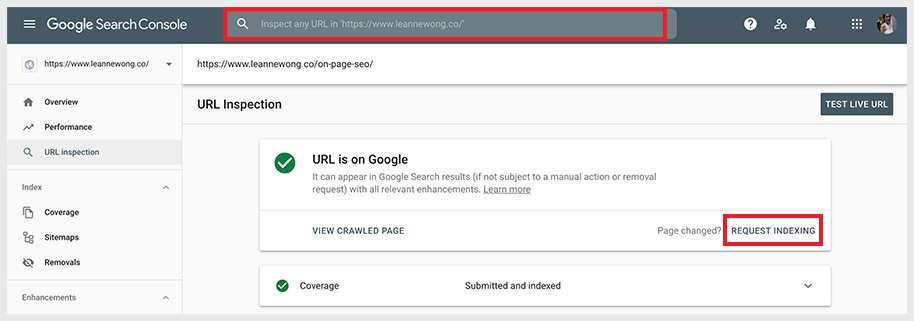

Step 5: Inspect any individual page URL and request indexing

When you make changes to existing pages and content, you can ask Google to re-crawl and re-index these pages.

Setting up Google Search Console is easy to do if you’ve not already done so.

Head over to Google Search Console > use the ‘URL Inspection’ tool > request indexing.

You can also double check the indexation status of any page on your site with Google’s URL inspection tool.

Next, we just wait for Google to do its thing and queue your webpage for indexing.

Step 6: Use Internal Links on Your Website

Internal linking is beneficial for SEO. An internal link is a hyperlink from one page to another page on your own website.

They help structure your website content and make it easy for Google to follow these links and find your pages. Below are some ways to use internal linking on your website.
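To get a feel for how many internal links a page actually has, you can pull them out of its HTML. The Python sketch below collects the links on a hypothetical blog post and keeps only the ones that stay on the same host. The `LinkParser` class, the `internal_links` helper, and the example.com URLs are assumptions for illustration.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlsplit

class LinkParser(HTMLParser):
    """Collect the href of every <a> tag on a page."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.hrefs.append(href)

def internal_links(html, page_url):
    """Return absolute URLs of links that stay on the same host as `page_url`."""
    parser = LinkParser()
    parser.feed(html)
    host = urlsplit(page_url).netloc
    # Resolve relative links against the page URL before comparing hosts.
    absolute = (urljoin(page_url, href) for href in parser.hrefs)
    return [url for url in absolute if urlsplit(url).netloc == host]

# Hypothetical blog post body with two internal links and one external link.
body = """<p>Read our <a href="/blog/seo-keywords/">keyword guide</a>,
the <a href="xml-sitemaps/">sitemap tutorial</a>, or
<a href="https://search.google.com/search-console/about">Search Console</a>.</p>"""

for link in internal_links(body, "https://example.com/blog/google-indexing/"):
    print(link)
```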

Use ‘related posts’ internal linking on your blog posts

Related posts show users contextual, related content based on the current post. This helps increase traffic to your website as users visit multiple articles on your site.

It’s a quick win to boost internal linking and reduce bounce rate, too.

On WordPress, you can set this up with simple plugins like Yet Another Related Posts Plugin (YARPP), Contextual Related Posts, or Inline Related Posts.

Add breadcrumbs across your website

Breadcrumbs are especially useful for sectional content (product pages, services, articles), where they show a logical flow of content.

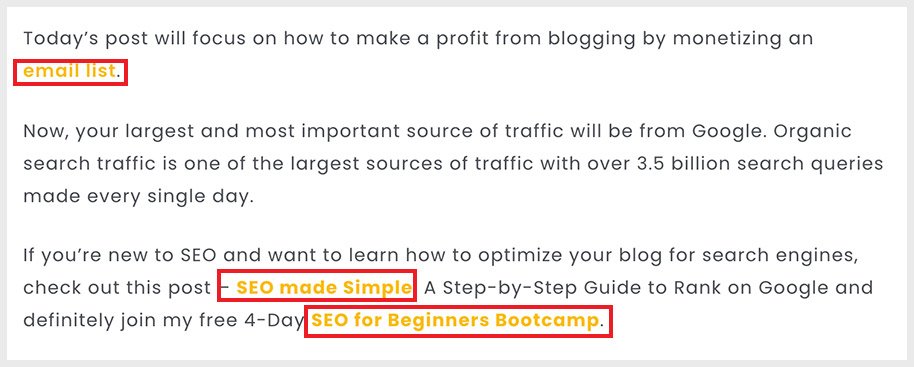

Add text-based internal links manually in the body content of a page

You can also add internal links the simple way, by manually placing them in the body content of a page.

Make sure to also use keyword-rich anchor text in these text-based internal links.

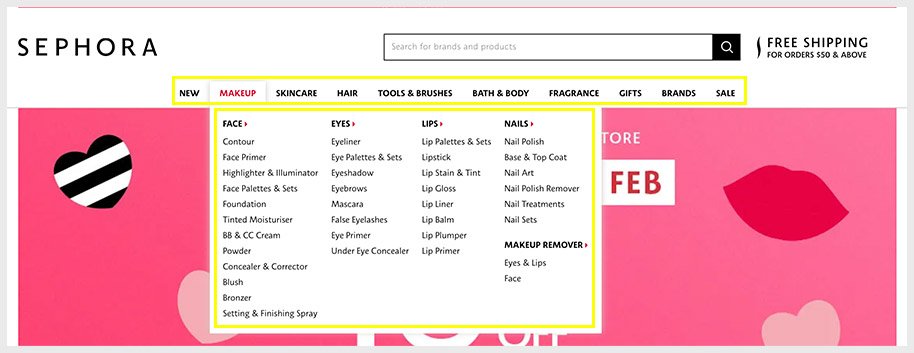

Step 7: Add internal links in the navigation menu and footer

Adding internal links in the navigation menu and footer builds stronger link structures on your website.

E-commerce websites can greatly benefit from a robust internal linking structure as they have a high quantity of products and content.

- Add main product category links

- Add product sub-category links

- Add new product categories and sale category pages

This not only helps search engines find your content better but it provides a better user experience too.

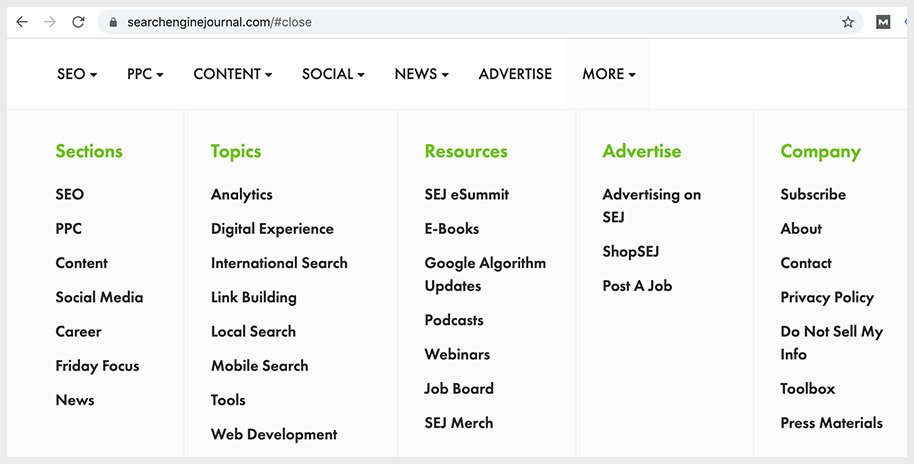

Blogs can greatly benefit from internal linking in the navigation menu and footer as well. A good example is the Search Engine Journal.

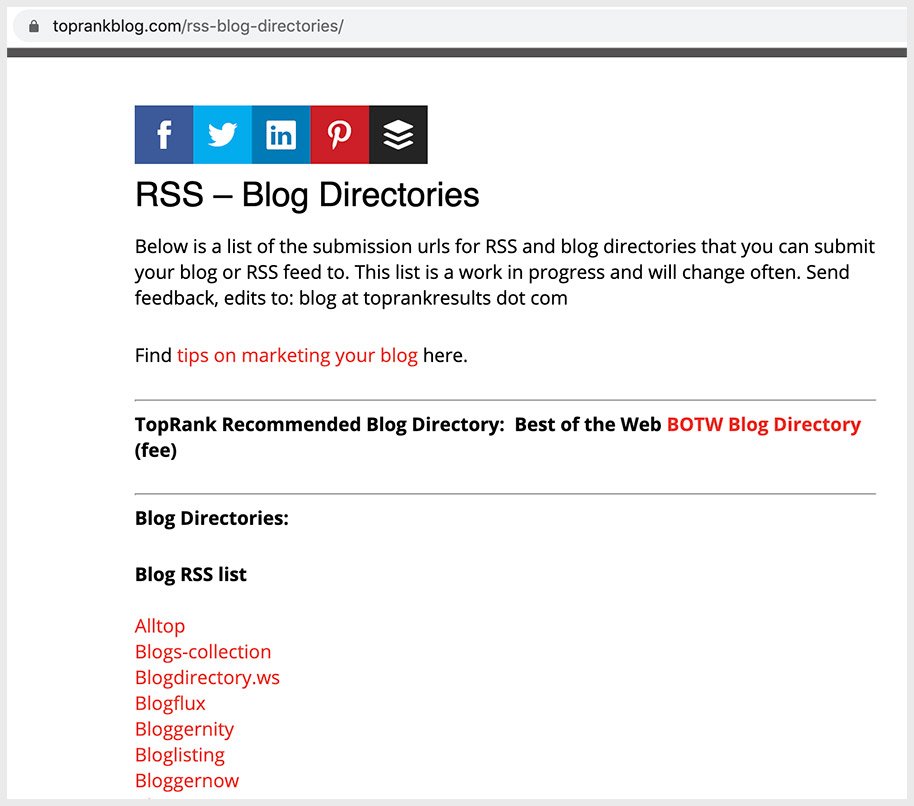

Step 8: Submit your website to directories

Submitting your website to directories has been a traditional way to get started with SEO. By placing your website on authoritative directories, you can build foundational backlinks and get found online.

The idea is that if your website is listed on other websites, Googlebot and other web crawlers can discover it faster, since those sites are already established and on Google’s radar.

Also, your website would be exposed to a much larger audience and that could send referral traffic to your site.

Make sure to only submit to authoritative directories

However, the relevance of directories has been declining over the years. Google has removed a number of free directories from its index, and Google’s John Mueller has said that directories are generally not relevant for SEO.

In my opinion, directories are still useful as long as they are niche-relevant and provide high link value.

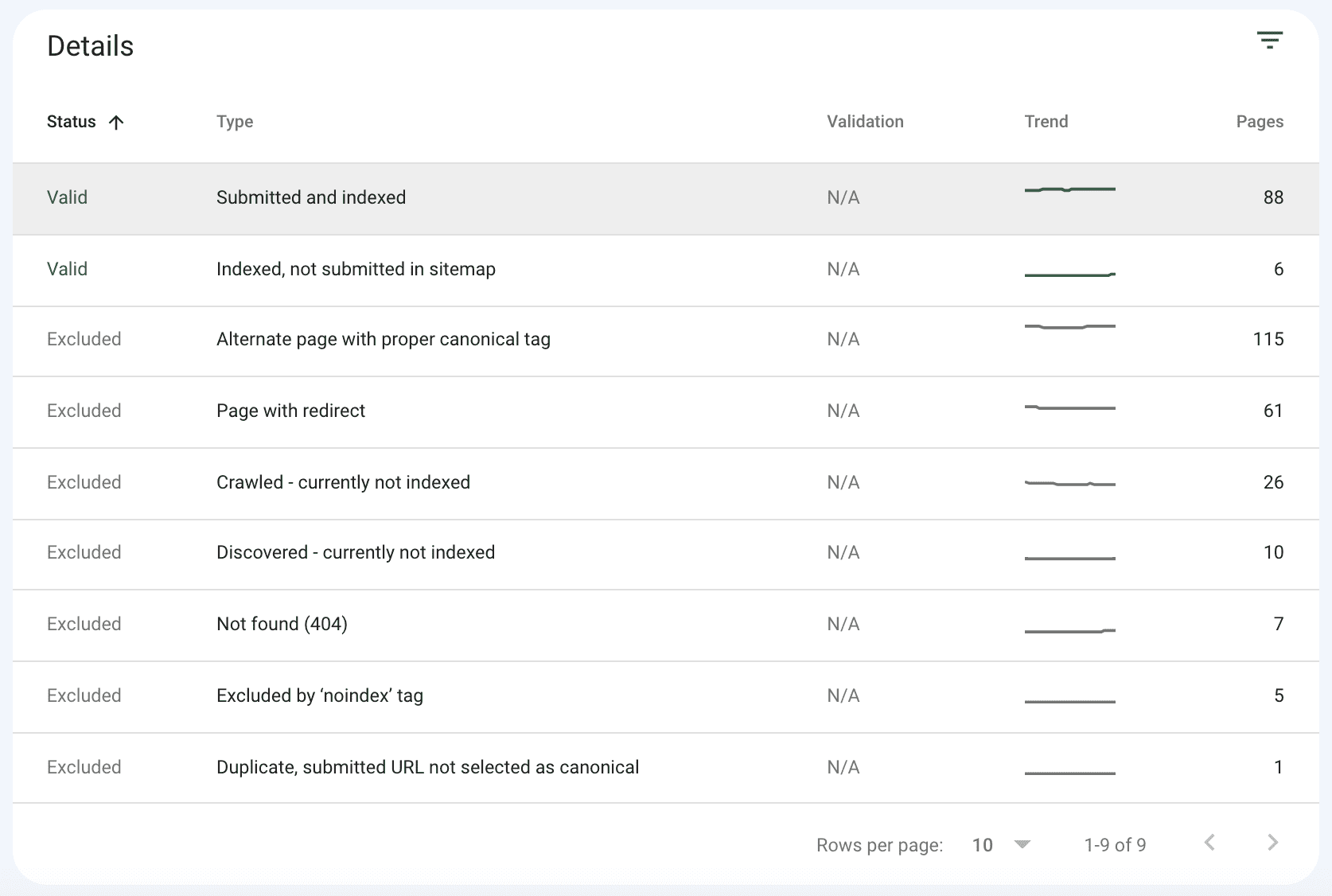

Step 9: Check for Google crawl errors regularly

As your website grows and you publish more content, you want to do a technical site hygiene check regularly. For small websites (fewer than 500 URLs), doing a check every quarter is fine.

For large websites, I suggest doing a monthly or bi-monthly check. Google Search Console is the first place to check.

Dashboard > Index > Coverage

Error pages

Look out for any Error pages flagged in red. These are pages on your website that Google could not index.

- Server error (status code 500-level error)

- Redirect error (a redirect chain so long that Google could not index the URL)

- Submitted URL blocked by robots.txt

- Submitted URL marked with ‘noindex’ tag

- Soft 404

- Broken page (status code 404)
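If you keep your own crawl log, the error types above can be approximated with a simple decision function. The Python sketch below is only an illustration: the `classify_crawl_result` helper, its parameters, and the limit of 10 redirects are assumptions made for this example, and it does not reproduce how Search Console itself decides which label to apply.

```python
def classify_crawl_result(status, redirects=0, blocked_by_robots=False,
                          noindex=False, looks_empty=False, max_redirects=10):
    """Map one crawl result to a coverage-style error label, or None if it looks fine."""
    if blocked_by_robots:
        return "Submitted URL blocked by robots.txt"
    if redirects > max_redirects:
        # Assumed cut-off for a redirect chain that is too long to follow.
        return "Redirect error"
    if 500 <= status <= 599:
        return "Server error"
    if status == 404:
        return "Broken page (404)"
    if status == 200 and noindex:
        return "Submitted URL marked noindex"
    if status == 200 and looks_empty:
        # The page answers 200 OK but has no real content, like a "not found" page.
        return "Soft 404"
    return None

# Hypothetical crawl log entries: (url, keyword arguments for the classifier).
crawl_log = [
    ("/pricing/", {"status": 503}),
    ("/old-offer/", {"status": 404}),
    ("/thank-you/", {"status": 200, "noindex": True}),
    ("/blog/", {"status": 200}),
]

for url, result in crawl_log:
    label = classify_crawl_result(**result)
    print(url, "->", label or "no error")
```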

The Index Coverage report shows the indexing state of all URLs that Google has visited or tried to visit.

It also shows a summary of each visit, which helps you discover which webpages have problems.

Prioritize fixing the pages that matter most so they get indexed next.

Step 10: Create unique and SEO-rich content

Content that is keyword-optimized and SEO-rich makes it easier for Google to understand your pages and rank them in search results.

When it comes to creating content, you want to be hitting those common SEO-friendly checkpoints.

- Write a keyword-targeted title and meta description.

- Include enough content on the page to target keywords and satisfy user queries. Long-form content does really well and tends to rank high.

- Avoid creating low-quality, thin content.

- Add alt text to any images you publish.

- Compress images to reduce file size.

- Fix broken links and check for crawl errors.
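A few of these checkpoints can be checked automatically. The Python sketch below covers only three of them (keyword in the title, keyword in the meta description, images without alt text) on a hypothetical page. The `OnPageParser` class, the `audit_page` helper, and the sample HTML are made up for illustration, and a plain substring match is a crude stand-in for real keyword targeting.

```python
from html.parser import HTMLParser

class OnPageParser(HTMLParser):
    """Collect the title, meta description, and images lacking alt text."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.description = ""
        self.images_without_alt = []
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "meta" and (attributes.get("name") or "").lower() == "description":
            self.description = attributes.get("content") or ""
        elif tag == "img" and not (attributes.get("alt") or "").strip():
            self.images_without_alt.append(attributes.get("src"))

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data

def audit_page(html, keyword):
    """Return a list of human-readable issues found on the page."""
    parser = OnPageParser()
    parser.feed(html)
    keyword = keyword.lower()
    issues = []
    if keyword not in parser.title.lower():
        issues.append("keyword missing from title")
    if keyword not in parser.description.lower():
        issues.append("keyword missing from meta description")
    for src in parser.images_without_alt:
        issues.append(f"image without alt text: {src}")
    return issues

# Hypothetical page: good title, weak description, one image without alt text.
page = """<html><head>
<title>How to Get Google to Index Your Website</title>
<meta name="description" content="Thirteen steps to faster crawling.">
</head><body>
<img src="/img/search-console.png" alt="Search Console coverage report">
<img src="/img/sitemap.png">
</body></html>"""

for issue in audit_page(page, "google to index"):
    print(issue)
```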

Having a good SEO keyword strategy is the first step to SEO.

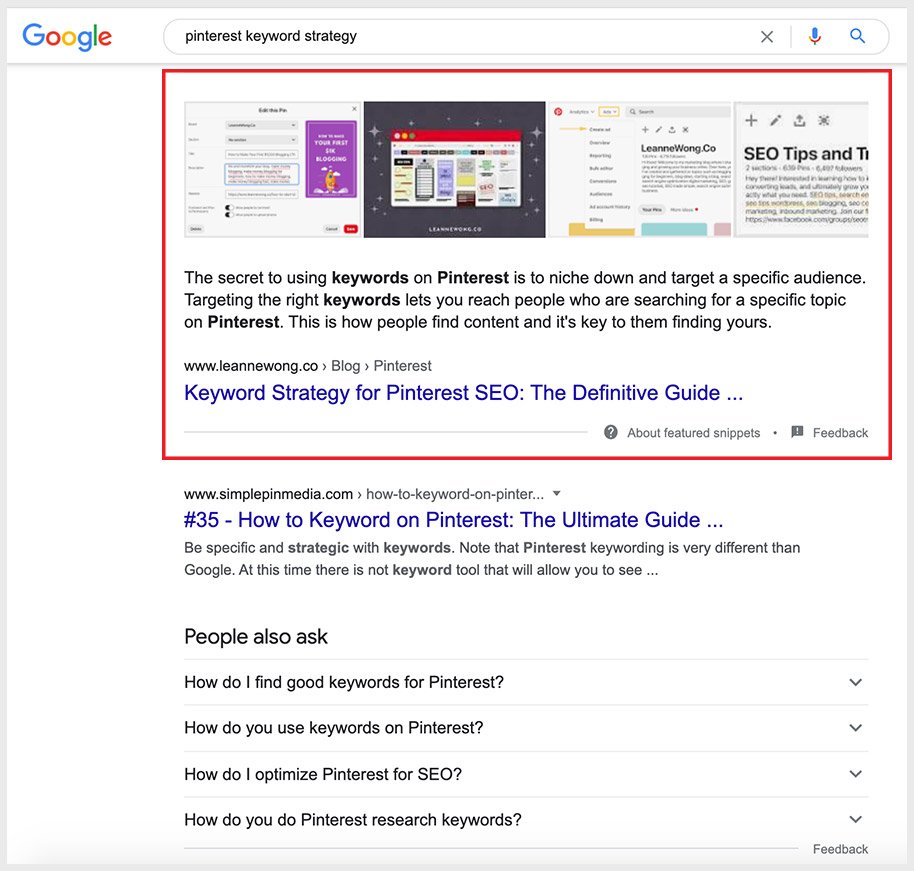

Next, I recommend learning SEO Copywriting Fundamentals and some advanced strategies like ranking on featured snippets to boost your SEO.

Step 11: Update old pages on your website

Google always wants to update and refresh its Index in order to display the most relevant content on search results.

So it is constantly looking for new pages to index, and high-quality content to show users.

Remove low-quality pages so that the more important pages are prioritized for indexing.

Google’s search algorithm sorts the most relevant pages to the search query first. The best results with high domain authority and quality content are ranked at the top of the search engine results page (SERP).

Freshening up your content will improve its relevance and potentially increase rankings.

Old pages on your website can actually have the most SEO value because they have been in Google’s index longer and, chances are, have already acquired a lot of backlinks.

That means high page rank and domain authority. So spend some time updating your existing content to give it that extra SEO boost.

One of my old articles that was published in 2019 had great SEO results after a round of content updates in 2020. This was after I updated the article with new content, added a few sub-headings and more images.

Now it ranks on a featured snippet on Google!

To learn more about how to rank in featured snippets, check out SEO Copywriting Fundamentals.

Step 12: Build backlinks and improve domain authority

Backlinks tell Google how authoritative and credible a website is. External links from websites act as ‘votes of confidence’ in the search ranking algorithm.

Link signals are a strong factor to boost rankings as Google wants to show results that are credible and authoritative.

Simply put, if your website has tons of high DA (domain authority) backlinks, Google will consider your site as more important than others that do not have a strong backlink profile.

Step 13: Promote social sharing of your content

Social sharing of your content gives your content more legs. That means more shares, more eyeballs, more likes, and engagement on your content.

Increased social media visibility can help Google and other web crawlers discover your website faster too.

Start by creating profiles on social media platforms. Not only do you get to share content on social feeds, but you get to place your website domain on the social media platform too. A backlink!

Now, Google has repeatedly said that social signals do not influence search engine rankings. But if you think about it, they certainly have an indirect impact, because the more your content is shared, the greater the chances of other people (and websites) linking to you.

The more present you are in front of your audience, the stronger your online visibility and conversions.

So much great info on here! I’m fairly new to blogging and hadn’t even heard of Google Search Console, I’ll have to start using it! Thank you for all the tips! 🙂

Oh my! Yes Google Search Console is super useful. Here’s the link to GSC:

https://search.google.com/search-console/about

Google and I have an ongoing dodgy relationship. Sometimes it wants to work with me and my website, and other times it just refuses.

Lol! that happens to most of us!

Wow! I learned something new again. Thank you! I hope I can index my pages faster so I’ll get more traffic.

I just recently got back to blogging and I need to learn more about how to improve my blog. Glad I found this, and indeed it’s a great help. I learned something new today.

Happy to help! Cheers

Lots of great tips! I wasn’t familiar with this and it would definitely be helpful for my website.

Indexing and discoverability isn’t the easiest topic!

This guide is EVERYTHING! Everyone with a website needs this. Not only have you given us all of the information we need to make our sites visible, you’ve broken it down in a way that’s super easy to digest and follow. Love this so much! Thank you for sharing this with us 🙂

It’s my pleasure, Sara! Happy you found the article useful.

Your tips are awesome! I can’t wait to try all of these.

Thank you!

Great tips! I love that you added the screenshots which makes it easy to follow along and understand!

Glad you enjoyed the article!

Everyone who has a website needs to read this. There is so much more to being indexed by Google than simple on-page SEO.

Cheers 🙂

This is such important information! I’ve had a blog for years, and it wasn’t until recently that I started to understand at least a little bit about all the things that really go into being indexed. I STILL didn’t know about everything here.

Just learned about breadcrumbing. This is so intriguing.

I’m so glad! 🙂

These are great tips! I’ve been struggling with almost everything from this list. Bookmarked for future reference!

Oh no! I hope it’ll get easier! Step by step.

Nnniiiccceeeee….this should occupy me, for the next few days, thank you, Leanne!

Great tips! I need this for my blog since I maintain it daily. I want to be discovered more too. 🙂

These are such great tips! Particularly, submitting xml and internal linking. Thanks for such an informative post.

Those are important!

These are great tips, thank you for sharing! I will be implementing a lot of these

Super useful! A lot of these are definitely on the to-do for me!

This post is so helpful! Thank you so much for taking time to share this important information with others.

I have been so bad about sharing on social media lately. I think it does make a difference.

Thank you for shining light on such an important, but often confusing, topic. Indexing had always confused me.

I hope this guide made it a bit easier 🙂

This is going to be such a huge help to so many people! Getting Google to index your site is so important, but it can be hard to do.

Just head to Google search console and request for indexing, my dear!

Leanne, there are so many great tips herein. I am absolutely going to need to spend time going through them again but I also signed up for your free course. Thank you.

I’m so happy to hear this, Elise!

I am happy to say that I am about halfway through and I am already seeing proof of my work.

That’s awesome, Elise!! Happy to share a number of intermediate and advanced SEO courses here – https://courses.leannewong.co/ 🙂

So many good tips here. The more we know the better for our sites.

Google is the king when it comes to SEO. Thanks for providing a detailed guide and crash course on indexing and maximizing reach of your website. It ain’t easy especially with the frequent changes that Google makes but hey that’s the name of the game!

SEO is always changing!

Thank you for these tips on getting a site indexed. I will have to be more vigilant on my websites!

This is all such useful information! I learned a lot. Thank you for sharing this with your readers.

These are amazing tips, and so informative – thank you SO MUCH for sharing!

Thanks Deborah!

This is very timely as I’m looking for ways to increase search traffic and improve indexing of my new posts.

Yes speeding up indexation for new posts will help get them found faster!

Thanks again for this helpful article. Just a question, in terms of social sharing, what platform would you consider as the most helpful in terms of indexing? On the other hand, I’ve yet to try directories though.

In terms of Google indexing, I think it will try to find every link possible. Submitting XML sitemap and using the ‘Request Indexing’ on Google Search Console helps with that. But for social media – not too sure if any one of the platforms has higher weightage over another for faster indexation though. Any social links should help 🙂

Thanks for the information! I could definitely use it to try to improve my blog.

Cheers 🙂

That’s great information about traffic! I always have trouble with backlinks. Thanks for sharing with us.

Oh no, hopefully you manage to resolve that!

Wow, thanks for such detailed post to understanding how google works better! I’m bookmarking this to refer back to it!

Happy to hear this 🙂

These are all excellent tips. As a VA, I try to advise my clients on almost all of these. It’s so important to increase visibility wherever possible, whenever possible.

Thank you, Ben!

Google does matter. They are annoying sometimes and their rules can get frustrating, but they are the largest search engine on the planet and getting things to index properly does make a difference.

Absolutely!!